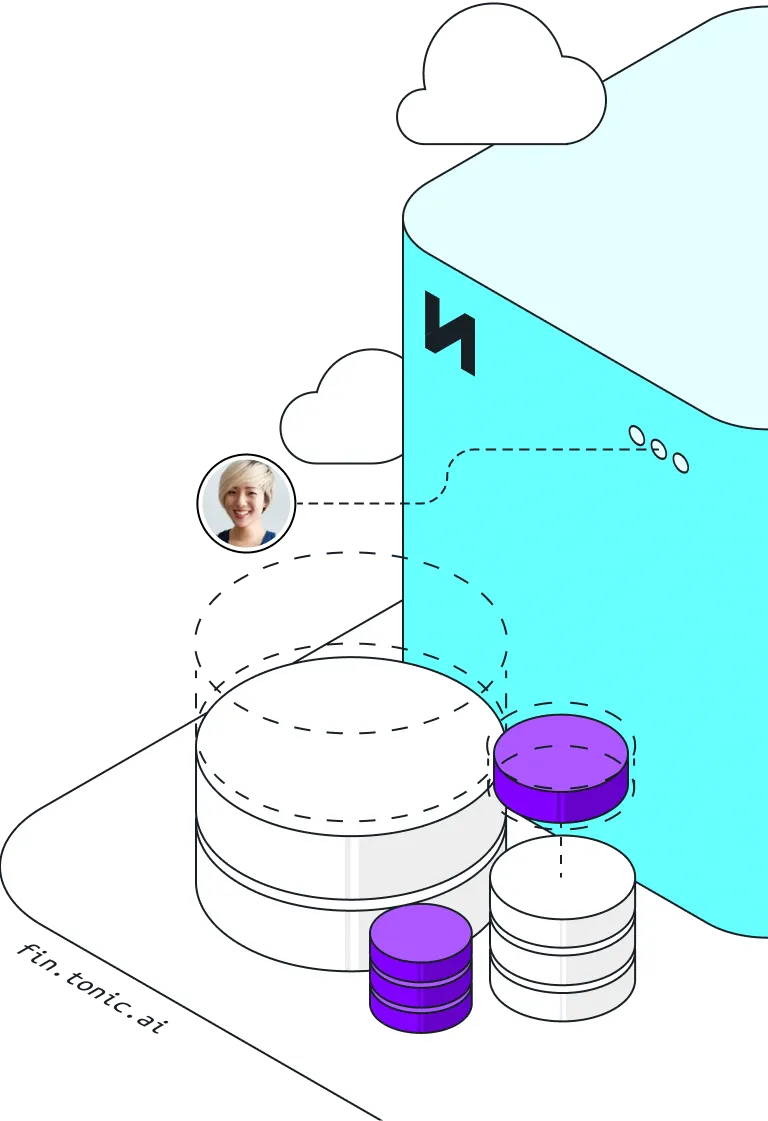

The all-in-one synthetic test data platform for developers

Tonic Structural is a test data management solution for complex structured, semi-structured, and document-based data.

Features

Accelerate development, testing, and QA to shorten your sprints and get your products to market faster.

Enable shift-left testing and development with better data for earlier bug detection and product optimization.

Eliminate resource-heavy workarounds and reduce infrastructure costs by streamlining DevOps test data management.

Ensure regulatory compliance and reduce risk in your lower environments by enforcing security policies within test data generation.

Unblock offshore teams and maximize your resources with synthetic test data that is safe, useful, and accessible.

Test data applications for development and QA workflows

Tonic integrates with every leading database

Tonic Structural supports leading databases, such as MySQL, PostgreSQL, SQL Server, and Oracle, and offers seamless synthetic data generation and management for your development and testing needs.
