The problem

In regulated industries like healthcare, fine-tuning Large Language Models (LLMs) on internal data is limited by strict rules around personally identifiable information (PII) and protected health information (PHI). Although this sensitive data is essential for improving model performance, regulatory and privacy risks make it difficult to use.

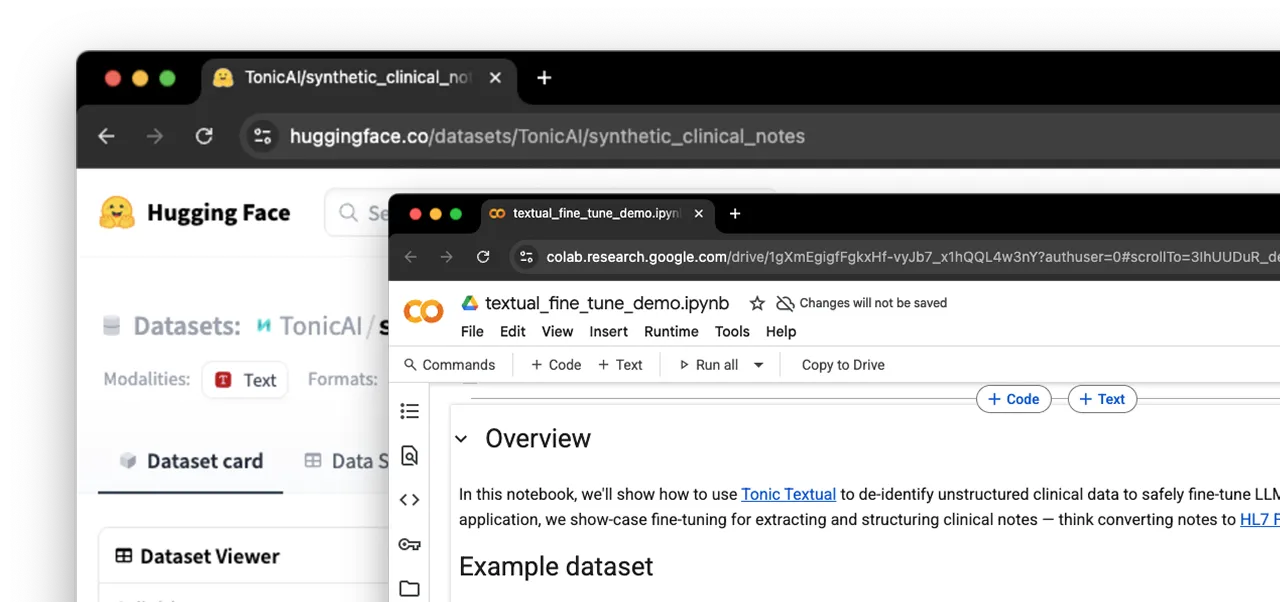

For example, suppose a model must be fine-tuned on unstructured medical notes so that it produces structured outputs following the HL7 FHIR standard. This is a vital task, since most clinical data is unstructured. However, fine-tuning on this data risks HIPAA violations, and the model may memorize and unintentionally reveal sensitive patient details. The problem is especially hard because PHI such as names and birthdates is necessary for the task yet also highly regulated.
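
To make the tension concrete, here is a minimal sketch of what a single training pair for this task might look like, assuming a simple prompt/completion format. The note, the patient, and the `phi_values` helper are hypothetical. `resourceType`, `name`, `gender`, and `birthDate` are standard elements of the FHIR R4 Patient resource, but a real pipeline would emit many more resources and fields.

```python
import json

# Fully synthetic clinical note (hypothetical; contains no real patient data).
note = (
    "Pt Jane Doe, DOB 03/14/1985, seen today for follow-up of "
    "type 2 diabetes. Female. Reports good adherence to metformin."
)

# Expected structured output: a minimal FHIR R4 Patient resource.
# Only a few standard Patient elements are shown here.
target = {
    "resourceType": "Patient",
    "name": [{"family": "Doe", "given": ["Jane"]}],
    "gender": "female",
    "birthDate": "1985-03-14",  # FHIR date format is YYYY-MM-DD
}

# One supervised fine-tuning example. The "prompt"/"completion" keys are an
# assumed format; the exact schema depends on the training framework.
training_example = {"prompt": note, "completion": json.dumps(target)}


def phi_values(patient: dict) -> list[str]:
    """Collect identifying values (name parts and birthDate) from a Patient resource."""
    values: list[str] = []
    for name in patient.get("name", []):
        values.extend(name.get("given", []))
        if "family" in name:
            values.append(name["family"])
    if "birthDate" in patient:
        values.append(patient["birthDate"])
    return values


# Every one of these values has to be read from the note to fill the output,
# so the PHI cannot simply be stripped from the training data.
print(phi_values(target))  # prints ['Jane', 'Doe', '1985-03-14']
```

If the name and date of birth were redacted from the note, the model would have nothing from which to populate these fields, which is why removing PHI from the input alone does not resolve the problem.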
