The latest

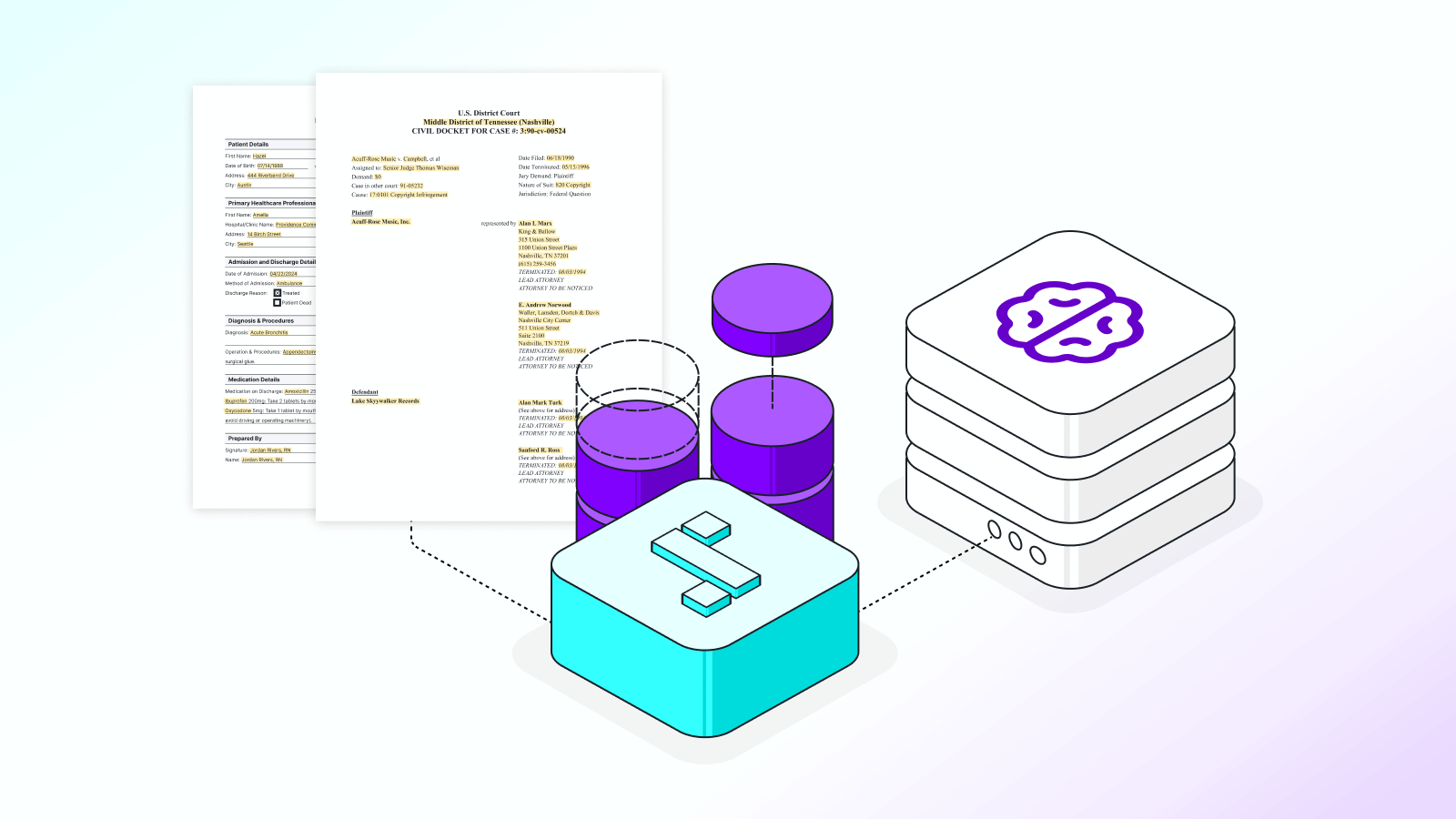

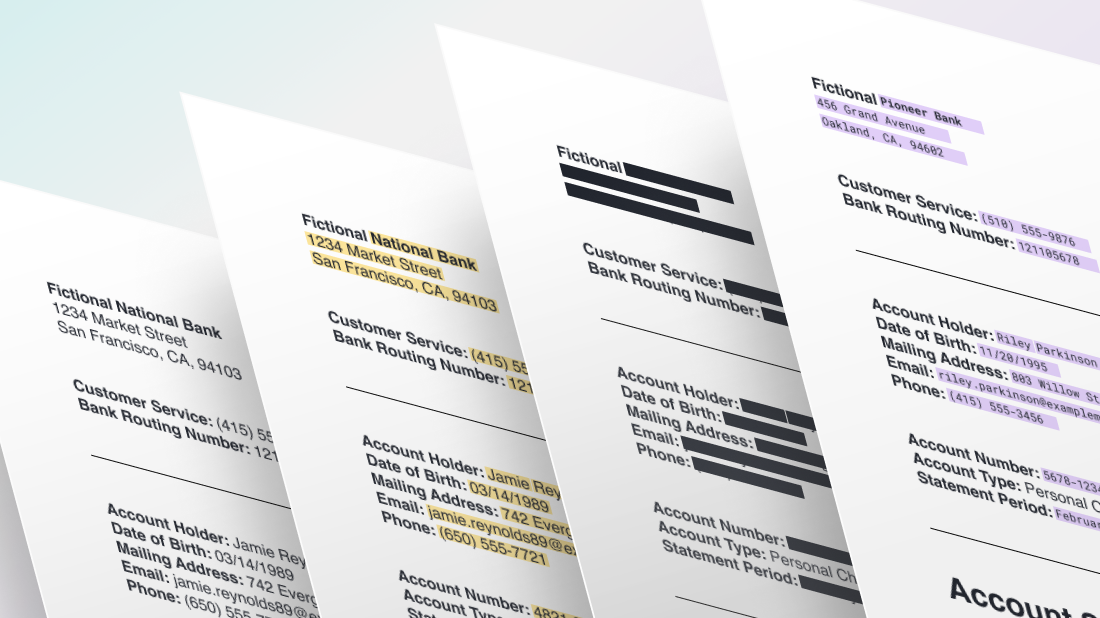

Benchmarking OpenAI's Privacy Filter: What it gets right, and where PII detection still needs real data

Benchmarking OpenAI’s Privacy Filter on real-world data, analyzing PII detection performance, recall gaps, and how fine-tuning improves results across domains.
