June ’21 product update: support for all the databases

At Tonic, we’ve been mimicking structured data since day 1. But let’s face it: not all data belongs in columns and rows. Which is why we’re very excited to announce our newest database integration: Tonic can now mimic your document-based data in MongoDB.

This latest integration is joined by a full parade of additional databases Tonic now natively connects with, including Amazon Redshift, Databricks, BigQuery, Spark on Amazon EMR, and Db2. The list represents an expansion of our technology into the realm of data warehouses. We’re enabling companies to securely de-identify their sensitive data the moment it comes into their database, for long-term, compliant storage, without running up against the limits dictated by privacy regulations.

From Postgres to Redshift to MongoDB, our customers work in multi-database environments, and our technology equips them to work across those databases, regardless of the type, to create a true mimic of their entire data ecosystem.

Let’s talk details.

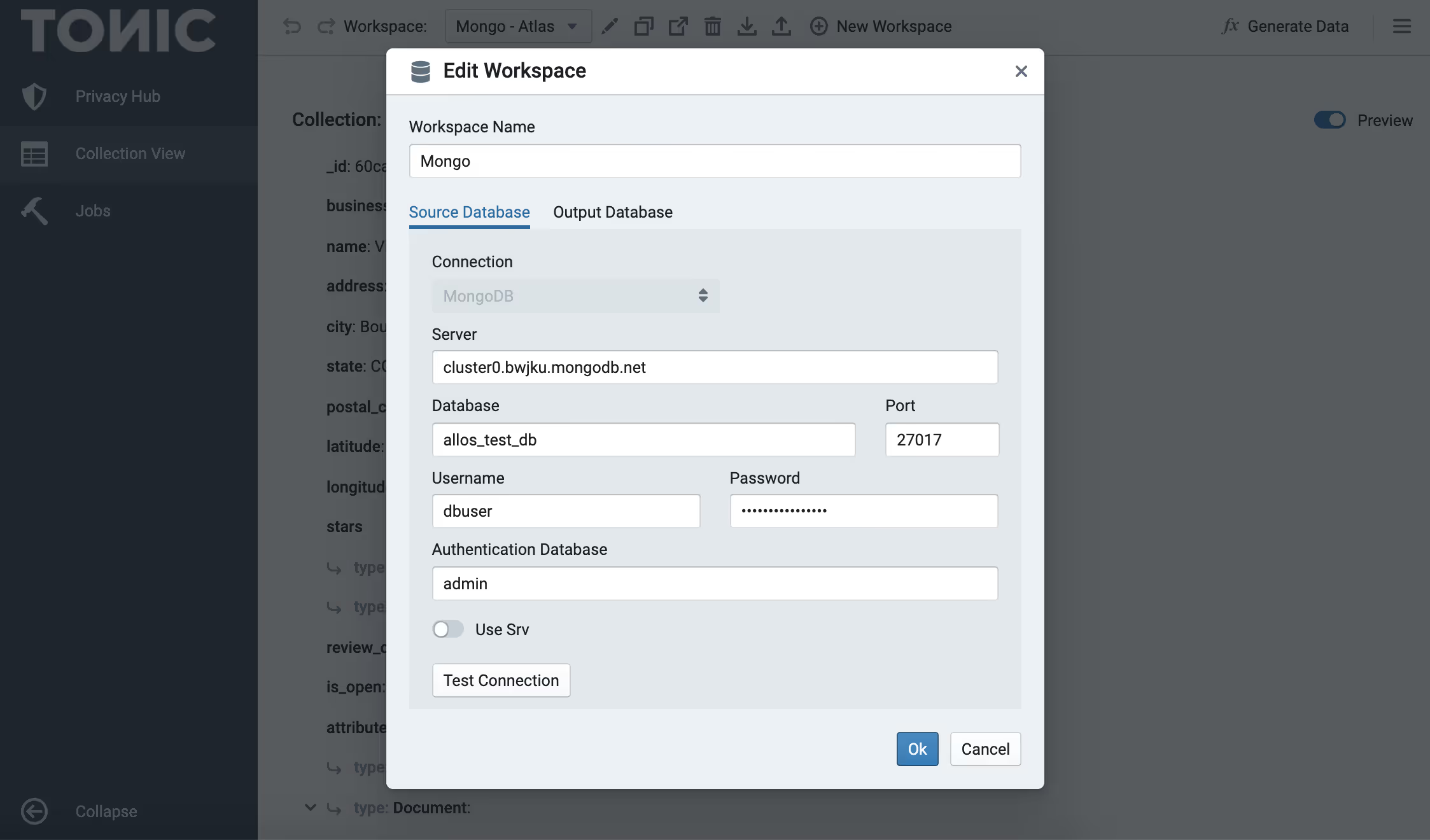

MongoDB: Documents De-identified

Customer profiles, webforms, financial transactions, medical records—so much sensitive data is stored in Mongo’s document-oriented databases. De-identifying it is not an easy task. Here’s a quick look at the challenges and how we solve for each:

Unpredictable semi-structured data

- As a NoSQL database, MongoDB is schema-less. Nested data structures can be arbitrarily complex, and data types can vary even within the same element across documents. Structure is inconsistent and sparse.

- Tonic’s solution: We’ve built a hybrid document model that parallels a relational schema, to capture the data structure’s complexity and carry it over into your lower environments.
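
To make the schema problem concrete, here is a minimal Python sketch of one way to summarize the structure of a schema-less collection: walk each document and record which types appear at each field path. This is an illustration only, not Tonic's hybrid document model; the `infer_paths` function, the dotted-path notation, and the sample documents are all hypothetical.

```python
from collections import defaultdict


def infer_paths(docs):
    """Map each dotted field path to the set of type names seen across docs."""
    seen = defaultdict(set)

    def walk(value, path):
        if isinstance(value, dict):
            # Descend into nested documents, extending the dotted path.
            for key, child in value.items():
                walk(child, f"{path}.{key}" if path else key)
        elif isinstance(value, list):
            # Mark array elements with a "[]" suffix on the path.
            for item in value:
                walk(item, path + "[]")
        else:
            seen[path].add(type(value).__name__)

    for doc in docs:
        walk(doc, "")
    return dict(seen)


# Hypothetical documents with sparse fields and inconsistent types.
docs = [
    {"name": "Ada", "contact": {"email": "ada@example.com", "phones": ["555-0100"]}},
    {"name": "Bo", "contact": {"email": None}, "age": 41},
    {"name": "Cy", "age": "unknown"},
]
schema = infer_paths(docs)
```

In this sample, `age` shows up as both an integer and a string, and `contact.phones[]` exists in only one document, which is the kind of inconsistency and sparsity a de-identification tool has to account for.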

Ubiquity of sensitive data

- JSON documents are a common format that represents many types of data well, which means sensitive values can appear in any element. De-identifying them requires enough granularity to apply a precise strategy to each element, so that both privacy and utility are preserved.

- Tonic’s solution: Our growing list of generators employs customized algorithms to transform data according to specific data types or privacy requirements. After applying the appropriate generator, users can preview the masked output directly within the UI.
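
As a rough sketch of what element-level treatment can look like, the Python below applies a different masking function to each chosen field path in a nested document. It is not Tonic's generator API: `mask_document`, `mask_email`, the `rules` mapping, and the demo key are hypothetical names, and keyed hashing (HMAC) stands in for whatever algorithm a real generator would use.

```python
import copy
import hashlib
import hmac

SECRET = b"demo-only-key"  # hypothetical key; a real deployment would manage this securely


def pseudonym(value, prefix):
    """Derive a stable pseudonym so the same input always yields the same output."""
    digest = hmac.new(SECRET, str(value).encode("utf-8"), hashlib.sha256).hexdigest()
    return f"{prefix}_{digest[:10]}"


def mask_email(value):
    return pseudonym(value, "user") + "@example.com"


def mask_document(doc, rules):
    """Return a copy of doc with each rule's function applied at its dotted path."""
    out = copy.deepcopy(doc)
    for path, fn in rules.items():
        *parents, leaf = path.split(".")
        node = out
        for key in parents:
            node = node.get(key) if isinstance(node, dict) else None
            if node is None:
                break  # path is absent in this document; skip the rule
        if isinstance(node, dict) and leaf in node:
            node[leaf] = fn(node[leaf])
    return out


doc = {"name": "Ada", "contact": {"email": "ada@corp.test"}}
rules = {"contact.email": mask_email, "ssn": lambda _: "000-00-0000"}
masked = mask_document(doc, rules)
```

Rules for paths that a given document lacks (here, `ssn`) are skipped, which matters when fields are sparse. Because the pseudonym is derived from the input with a fixed key, the same email maps to the same masked value wherever it appears.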

Connecting natively

- Connecting directly to your MongoDB environment from the same product as your relational data provides you with complete visibility and accessibility across all of your data for end-to-end development and testing.

- Tonic’s solution: Tonic fits into your database architecture by sitting between environments, connecting directly to your production database and to a staging or test database. Our technology allows you to work across database types, whether it's MySQL, Redshift, or Mongo, in a single tool that offers shareable workspaces, an API, and input-to-output consistency across tables and databases.

We’re proud to be leading the industry in offering de-identification of semi-structured data in MongoDB, for which the landscape of available tools is currently very limited.

Data Warehouse Support: Amazon Redshift, Databricks, BigQuery, and more

The market has spoken: big data needs a big warehouse, and we're here to help keep it safe. Demand for data anonymization in Redshift, Databricks, and BigQuery is skyrocketing, thanks to the ever-increasing amounts of data companies need to store and the ever-stricter regulations around how long sensitive data can be kept in a database.

We’ve built database connectors for today’s leading data warehouses to enable organizations to store their data compliantly. And if developers need to access that data for QA and testing, they can do so without infringing on anyone’s privacy, while still getting data they can use. In addition to prioritizing privacy, our tools are designed to ensure data utility, no matter the scale of the dataset you’re working with.

Here are a few of the features in Tonic tailored to the specific needs of de-identifying data stored in data warehouses:

- Primary and Foreign Key Synthesis: Tonic’s primary and foreign key generators allow you to add these constraints to your data model, in the event that they aren’t currently assigned in your database. This is particularly useful for Redshift users, given that Redshift doesn’t enforce PK and FK constraints, which are necessary for preserving referential integrity when generating mock data.

- Minimizing Large Data Footprints: Tonic uses multi-database subsetting to synthesize your data no matter the size of your database. Subsetting creates a coherent slice of data across all your databases that preserves referential integrity while shrinking petabytes of data down to gigabytes.

- Proactive Data Protection: Using the privacy scan feature, Tonic eliminates hours of manual work by automatically locating and de-identifying sensitive information (PII/PHI) throughout a database. Tonic also delivers schema change alerts to proactively keep sensitive production data from leaking into lower environments.
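
To illustrate how declared keys and subsetting fit together, here is a small Python sketch under simplifying assumptions: tables are in-memory lists of dicts, and foreign keys are declared in a plain mapping because the database itself does not enforce them. The `subset` function, the table names, and the `foreign_keys` structure are hypothetical and do not reflect how Tonic implements subsetting.

```python
def subset(tables, foreign_keys, seed_table, keep):
    """Keep seed rows matching `keep`, then pull in every parent row they reference."""
    result = {name: [] for name in tables}
    result[seed_table] = [row for row in tables[seed_table] if keep(row)]
    changed = True
    while changed:  # repeat until no referenced parent row is missing
        changed = False
        for (child, col), (parent, key) in foreign_keys.items():
            wanted = {row[col] for row in result[child]}
            have = {row[key] for row in result[parent]}
            missing = [r for r in tables[parent]
                       if r[key] in wanted and r[key] not in have]
            if missing:
                result[parent].extend(missing)
                changed = True
    return result


# Virtual foreign keys: (child_table, child_column) -> (parent_table, parent_key)
foreign_keys = {
    ("orders", "customer_id"): ("customers", "id"),
    ("order_items", "order_id"): ("orders", "id"),
}
tables = {
    "customers": [{"id": 1}, {"id": 2}, {"id": 3}],
    "orders": [
        {"id": 10, "customer_id": 1},
        {"id": 11, "customer_id": 2},
        {"id": 12, "customer_id": 3},
    ],
    "order_items": [
        {"id": 100, "order_id": 10},
        {"id": 101, "order_id": 12},
        {"id": 102, "order_id": 11},
    ],
}
small = subset(tables, foreign_keys, "order_items", lambda r: r["id"] in (100, 101))
```

Starting from two order items, the sketch pulls in only the orders and customers those items reference, so every foreign key in the smaller dataset still resolves to a row.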

Did someone say Db2?

Yes, we did! Not to be overshadowed by NoSQL documents and large-looming warehouses, Db2 is the latest addition to our list of relational database integrations, and we’re excited to support it. We can connect to both Db2 LUW and iSeries, and offer our full suite of tools for each. As with MongoDB, Tonic is one of the few data generation tools available that currently offers a Db2 integration.

The Takeaway

The database-agnostic nature of Tonic and the ability to connect directly to your database, as opposed to a data-upload approach, are two of our key differentiators as compared to other data anonymization and synthesis tools on the market today. Add to this the rare ability to work with document-based data in MongoDB, and we’re excited to be leading the charge in getting developers and data engineers the safe, realistic data they need.

What database are you connecting to? Or better yet, what new database would you like to see? Drop us a line and let us know; it may just make it into our next launch event.

Chiara Colombi is the Director of Product Marketing at Tonic.ai. As one of the company's earliest employees, she has led its content strategy since day one, overseeing the development of all product-related content and virtual events. With two decades of experience in corporate communications, Chiara's career has consistently focused on content creation and product messaging. Fluent in multiple languages, she brings a global perspective to her work and specializes in translating complex technical concepts into clear and accessible information for her audience. Beyond her role at Tonic.ai, she is a published author of several children's books which have been recognized on Amazon Editors’ “Best of the Year” lists.
