Data privacy compliance for software and AI development

Generate high-quality de-identified data that preserves both privacy and utility for software testing and model training, so you can achieve regulatory compliance across your organization without slowing down innovation.

100% PII-free test data

8x faster release cycles

4x return on investment

Achieve compliance and enable your developers

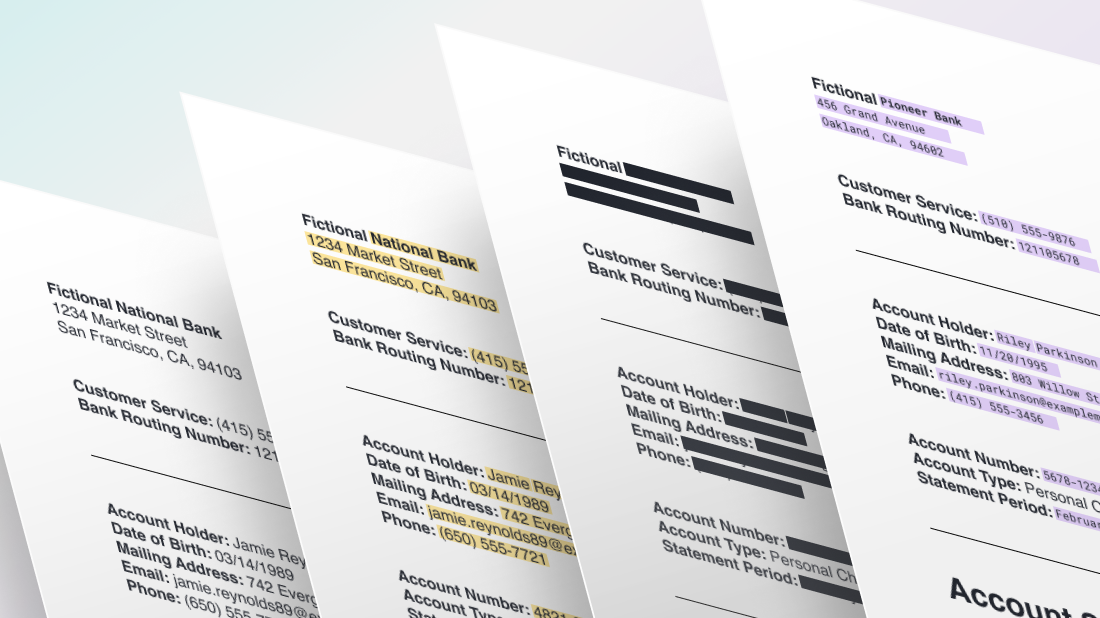

Privacy guarantees

Detect and de-identify PII throughout structured and unstructured data, applying regulatory-specific data transformations to ensure compliance and eliminate risk in development workflows.

Global enablement

Generate realistic data that is safe to share across borders, so offshore teams can work with it while you meet the de-identification requirements of international regulations.

Accelerated development

Streamline data governance to eliminate costly bottlenecks, and optimize data readiness to enable shift-left testing and AI innovation.

The data privacy compliance solutions for software and AI engineers

Realistic data masking that preserves privacy and utility

Apply granular data de-identification techniques that securely remove PII from your structured and unstructured data without damaging the data’s utility for software testing or model training.

Multilingual Named Entity Recognition (NER) models

Automatically identify dozens of sensitive entity types in free-text data across your data stores with proprietary, best-in-class multilingual machine learning models for NER.

Compliant data de-identification for global regulations

Use targeted data generators and Expert Determination to achieve compliance with global data privacy regulations and standards, including GDPR, CCPA, PCI DSS, and HIPAA.

Built-in data governance tools for visibility and security

Standardize data generation rules to enforce security policies. Use privacy reports, audit trails, and customizable role-based access control (RBAC) to monitor and validate privacy compliance throughout your data pipelines.

The Tonic.ai product suite

Tonic Fabricate

AI-powered synthetic data from scratch and mock APIs

Tonic Structural

Modern test data management with high-fidelity data de-identification

Tonic Textual

Unstructured data redaction and synthesis for AI model training

Frequently asked questions

Tonic.ai helps organizations reduce compliance risk by enabling the safe use of data without exposing sensitive or regulated information. Teams can work with realistic data while maintaining strong privacy controls.

Tonic.ai supports compliance efforts related to GDPR, HIPAA, CCPA, SOC 2, PCI DSS, and internal data governance policies by minimizing the use of real personal and confidential data outside secure production environments.

Uncontrolled access to production data increases audit scope, breach risk, and regulatory exposure. Tonic.ai reduces this surface area by replacing sensitive datasets with privacy-safe alternatives for non-production use.

By enforcing consistent data protection policies and providing comprehensive privacy reports and audit trails, Tonic.ai helps organizations demonstrate controlled data handling practices and reduce the complexity of compliance audits.

Data privacy teams, compliance leaders, legal stakeholders, and enterprise platform teams all use Tonic.ai to balance regulatory requirements with the need for fast, data-driven execution.
