The latest

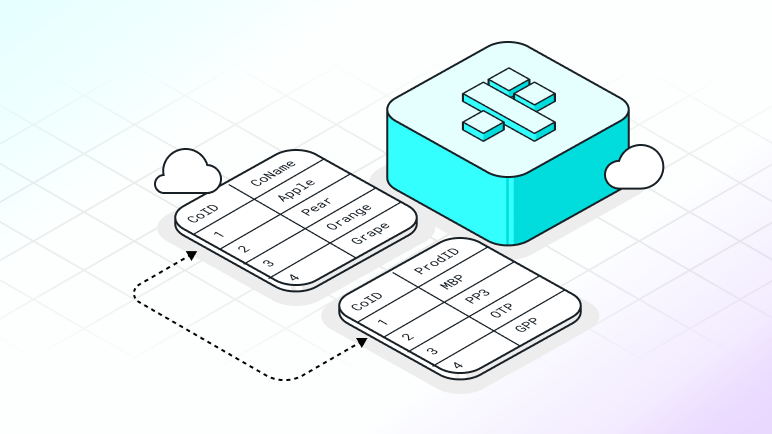

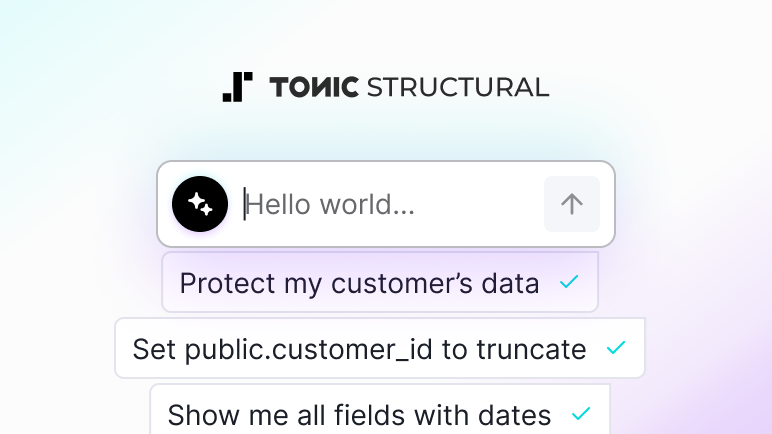

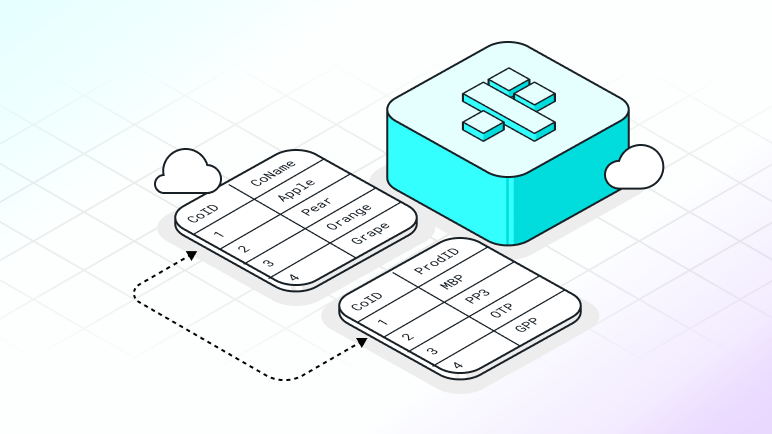

Generate referentially intact synthetic data across your entire data ecosystem

Your software runs on many interconnected systems. Now Fabricate generates referentially intact synthetic data across all of them, from a single conversation.
